Kenna API Documentation Updates as of Aug 23, 2022


on 05-18-2023 10:21 AM

Greetings. It has been a while since my last blog, but I have been focused on improving the Kenna API documentation. Instead of focusing on code, this blog covers some of the Kenna API documentation updates. Of course, there will still be some code. What is a developer's blog without some code, eh?

Resources Section

Let's start with the Resources section. It was added because I found it difficult to locate my previously written blogs. This section lists API blogs, videos about Kenna APIs, and Kenna (now Cisco) API webinars. Each blog entry includes the publish date and the APIs discussed in the blog, if any. Please check out this section for guidance.

New Inference Location

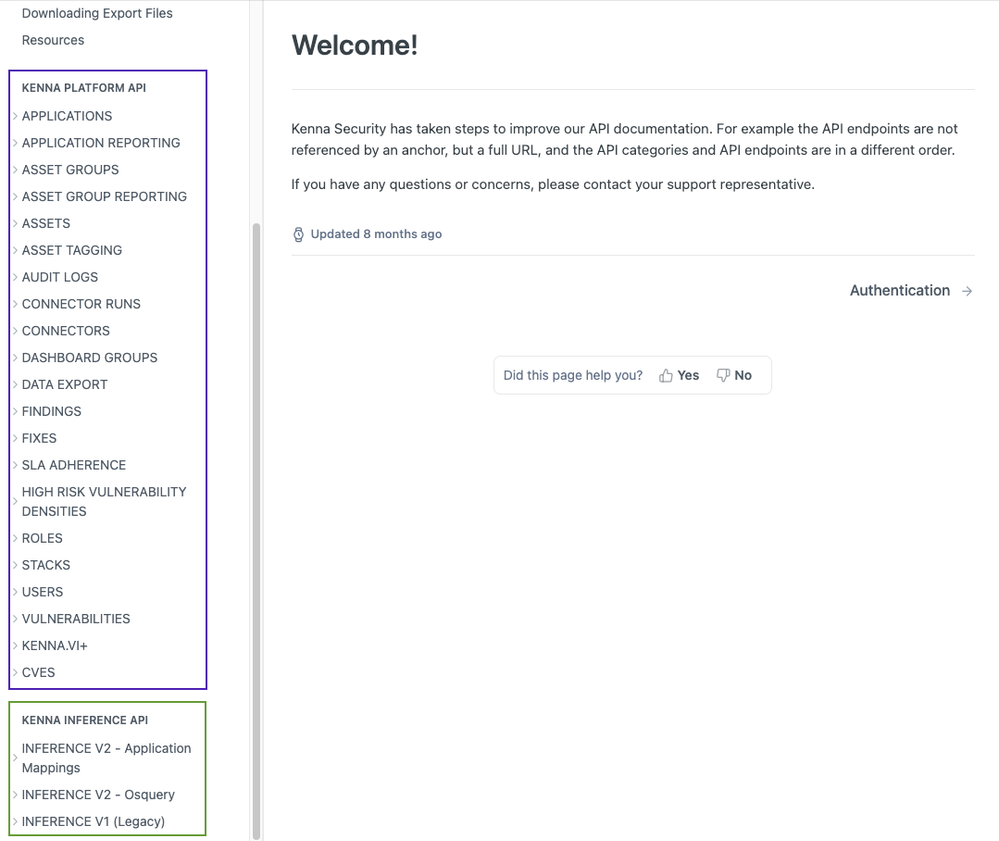

You may have noticed that the "Inference" APIs have been moved out of the "Kenna Platform API" section and into their own "Kenna Inference API" section. This was done because the Inference code, and thus its API documentation, resides in a different repository. Note also that there is a new set of v2 APIs, which includes application mappings and OS query. At this time, the Inference v1 APIs are still available.

In the screenshot, the "Kenna Platform API" set is outlined by the purple rectangle, while the "Kenna Inference API" set is outlined by the green rectangle.

Postman Updates

With the new "Inference" section, an alternative to Kenna_API_postman_collection.json was added. The alternative consists of two OpenAPI specification files:

These specification files are generated directly from the API documentation in the source code, so they will be updated more frequently than the collection file.

The procedure to create Postman collections is similar to the one described in "Kenna Security API Postman Collection". README.md has been updated to reflect these changes.
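The specification files are standard OpenAPI documents, so a few lines of Python can show which endpoints a file covers before you import it into Postman. The sketch below is only illustrative. It assumes the specification is stored as JSON; a YAML file would need a YAML parser instead. The helper names load_spec() and list_operations() are hypothetical.

```python
import json

# Keys in an OpenAPI path item that describe HTTP operations; other keys
# (for example "parameters" or "summary") are skipped.
HTTP_METHODS = {"get", "put", "post", "delete", "patch", "head", "options", "trace"}


def load_spec(path):
    """Load an OpenAPI specification file (assumed to be JSON)."""
    with open(path, encoding="utf-8") as spec_file:
        return json.load(spec_file)


def list_operations(spec):
    """Return (METHOD, path, summary) tuples sorted by path, then method."""
    operations = []
    for path, path_item in spec.get("paths", {}).items():
        for method, operation in path_item.items():
            if method.lower() in HTTP_METHODS:
                operations.append((method.upper(), path, operation.get("summary", "")))
    return sorted(operations, key=lambda op: (op[1], op[0]))
```

For example, `for method, path, summary in list_operations(load_spec("your_spec.json")): print(method, path, summary)` prints one line per documented operation. Here `your_spec.json` stands for whichever specification file you downloaded.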

Users external_id Field

The external_id field for users is available in the UI, and now it can also be set as a body parameter in "Create Users" and "Update Users." The "List Users" and "Show Users" APIs now document external_id in their responses. This is a pass-through field, so it can be any string you want, such as an employee ID number or an LDAP identity.
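Below is a rough sketch of how a script might include external_id when creating or updating a user. It rests on several assumptions that you should check against the "Create Users" and "Update Users" documentation:

- the user attributes are nested under a "user" key;
- users are created with POST /users;
- users are updated with PUT /users/{id}.

The function build_user_request() and the example attribute names are hypothetical.

```python
def build_user_request(base_url, user_fields, external_id, user_id=None):
    """Return (method, url, body) for a create or update user call.

    Assumptions (hypothetical, verify against the API docs):
      * create is POST {base_url}/users, update is PUT {base_url}/users/{user_id}
      * the body nests attributes under a "user" key
    """
    if not isinstance(external_id, str):
        # external_id is a pass-through string, e.g. an employee ID number.
        raise TypeError("external_id must be a string")

    root = base_url.rstrip("/")
    body = {"user": {**user_fields, "external_id": external_id}}
    if user_id is None:
        return "POST", f"{root}/users", body
    return "PUT", f"{root}/users/{user_id}", body
```

With requests, the result could be sent as `requests.request(method, url, headers=headers, json=body)`, where headers carries your API key.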

Custom Field Usage

As you might recall, my last blog covered custom field usage. Along with the blog, the API documentation was updated for custom_fields. Unfortunately, dynamic query parameters are not supported in our documentation framework, so testing custom_fields has to be performed outside of the API documentation framework.

Here is some code from search_custom_fileds.py:

```python
# Set up the search vulnerability endpoint URL.
# base_url (with a trailing "/"), page_size, search_custom_field, and
# search_value are set earlier in the script.
page_size_query = f"per_page={page_size}"
custom_field_query = f"custom_fields:{search_custom_field}[]={search_value}"
search_url = f"{base_url}vulnerabilities/search?{page_size_query}&{custom_field_query}"
print(f"URL: {search_url}")
```
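The snippet above inserts search_value into the URL as-is. A value containing spaces or other special characters would need percent-encoding. The sketch below applies urllib.parse.quote to each value. It also repeats the `custom_fields:<id>[]` parameter once per value. That repetition assumes the API treats the trailing `[]` as a Rails-style array and matches any of the values, which is an assumption rather than documented behavior. The function name build_custom_field_search_url() is hypothetical.

```python
from urllib.parse import quote


def build_custom_field_search_url(base_url, custom_field_id, values, page_size=100):
    """Build a Search Vulnerabilities URL that filters on one custom field.

    Each value is percent-encoded; the parameter name keeps the literal
    custom_fields:<id>[] form used in search_custom_fileds.py.
    """
    params = [f"per_page={page_size}"]
    for value in values:
        # Assumption: repeating the [] parameter supplies multiple values.
        params.append(f"custom_fields:{custom_field_id}[]={quote(str(value))}")
    return f"{base_url.rstrip('/')}/vulnerabilities/search?" + "&".join(params)
```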

Data Exports Updates

First off, a Download Export Files section has been added. This section discusses how to download export files using curl and Python. Again, the documentation framework doesn't support file downloads, so you will have to run data exports outside the documentation framework if you want to keep the gzipped file.
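Here is a minimal standard-library sketch of the same idea. It streams the export to disk so the gzipped file is kept, and only then decompresses it for reading. Treat the following as assumptions and check them against the Download Export Files section:

- the download URL (`data_exports?search_id=...`);
- the `X-Risk-Token` header name;
- the export having been requested in JSON format.

The functions download_export() and load_export() are hypothetical names.

```python
import gzip
import json
import shutil
import urllib.request


def download_export(base_url, api_key, search_id, gz_path):
    """Save a data export to gz_path without decompressing it.

    Assumed endpoint and header; urlopen raises HTTPError on 4xx/5xx.
    """
    url = f"{base_url.rstrip('/')}/data_exports?search_id={search_id}"
    request = urllib.request.Request(url, headers={"X-Risk-Token": api_key})
    with urllib.request.urlopen(request) as response, open(gz_path, "wb") as out_file:
        # Stream in chunks so large exports are not held in memory.
        shutil.copyfileobj(response, out_file)


def load_export(gz_path):
    """Decompress a gzipped JSON export and return the parsed data."""
    with gzip.open(gz_path, "rt", encoding="utf-8") as export_file:
        return json.load(export_file)
```

Keeping download and parsing separate means the .gz file stays on disk, so you can reload it later without requesting another export.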

Second, "Request Data Export" has been updated to include pointers to search fields that can be used for each model: assets, fixes, and vulnerabilities. These search fields are added as query parameters.

Third and finally, "Check Data Export Status" now documents all the messages that the API returns. HTTP status code 206 has also been added as a response. It indicates that the export is not ready yet, either because it is "enqueued" or because it is still "processing". Here is a code example from list_custom_fields.py:

```python
import sys

import requests


# Get the export status. Return True when the correct phrase is returned.
def get_export_status(base_url, headers, search_id):
    check_status_url = f"{base_url}/data_exports/status?search_id={search_id}"

    # Check the export status.
    response = requests.get(check_status_url, headers=headers)

    # 206 means the export is not ready yet ("enqueued" or "processing").
    if response.status_code == 206:
        return False

    # process_http_error() is a helper defined elsewhere in the script.
    if response.status_code != 200:
        process_http_error("Check Data Export Status API Error", response, check_status_url)
        sys.exit(1)

    resp_json = response.json()
    return resp_json['message'] == "Export ready for download"
```

Note that this example simply returns False when the status code is 206. It doesn't print a message, but your code is welcome to. As an extra check, when status code 200 is returned, the message is verified to be "Export ready for download."
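Because a 206 response only means "not ready yet," callers usually poll until the export is ready. Below is a minimal polling sketch, assuming a status check like get_export_status() above that returns True when the export is ready. The function wait_for_export() and its default timeout and interval are hypothetical choices. The sleep function is injectable so the loop can be exercised without waiting. The timeout counts only the time spent sleeping, not the time spent on requests.

```python
import time


def wait_for_export(check_status, timeout=600, interval=10, sleep=time.sleep):
    """Call check_status() every `interval` seconds until it returns True.

    Returns True if the export became ready, False if roughly `timeout`
    seconds of sleeping passed first.
    """
    waited = 0
    while waited < timeout:
        if check_status():
            return True
        sleep(interval)
        waited += interval
    return False
```

A typical call would be `wait_for_export(lambda: get_export_status(base_url, headers, search_id))`, followed by the download step once it returns True.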

Vulnerability fields Query Parameter

Upon doing some code archaeology, it was discovered that the "Search Vulnerabilities" API has a fields query parameter. It is a comma-separated list of fields to be returned in the "Search Vulnerabilities" API response. (Note: This parameter is not supported in vulnerability exports.) For example, the following code snippet:

```python
import requests

# headers holds the Kenna API key and is set up earlier in the script.
url = "https://api.kennasecurity.com/vulnerabilities/search"
query_params = "q=vulnerability_score:>50&fields=id,status,easily_exploitable,risk_meter_score"
search_url = url + "?" + query_params
response = requests.get(search_url, headers=headers)
```

Produces the following JSON output:

```json
[
  {
    "easily_exploitable": false,
    "id": 3618386756,
    "risk_meter_score": 100,
    "status": "open"
  },
  {
    "easily_exploitable": false,
    "id": 3618386715,
    "risk_meter_score": 84,
    "status": "open"
  },
  {
    "easily_exploitable": false,
    "id": 3618386399,
    "risk_meter_score": 76,
    "status": "open"
  },
  {
    "easily_exploitable": false,
    "id": 3618386393,
    "risk_meter_score": 100,
    "status": "open"
  }
]
```

As you can see, only the id, status, easily_exploitable, and risk_meter_score fields were returned, which reduces the amount of data in the response.
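Instead of writing the query string by hand, a script can build it from a list of field names and then confirm that the response contains nothing extra. The sketch below uses urllib.parse.urlencode, which percent-encodes `:`, `>`, and `,`. A standard server should decode these back to the same query. The helper names build_search_url() and unexpected_fields() are hypothetical. The check expects a list of vulnerability objects like the output above.

```python
from urllib.parse import urlencode


def build_search_url(base_url, query, fields):
    """Build a Search Vulnerabilities URL with q and a comma-separated fields list."""
    params = urlencode({"q": query, "fields": ",".join(fields)})
    return f"{base_url.rstrip('/')}/vulnerabilities/search?{params}"


def unexpected_fields(vulnerabilities, fields):
    """Return any keys in the response objects that were not requested."""
    allowed = set(fields)
    extra = set()
    for vuln in vulnerabilities:
        extra.update(set(vuln) - allowed)
    return extra
```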

Conclusion

I hope this has been informative and that you'll be able to use this information when developing your code.

Until next time,

API Evangelist

This blog was originally written for Kenna Security, which has been acquired by Cisco Systems.

Learn more about Cisco Vulnerability Management.
